Talking about AI in everyday, non-specialist conversations is fraught with category mistakes. This is mainly because most people don’t know how AI practitioners talk about their work. In this article, I’ll help non-specialists understand the AI conversation: both what’s being said and why.

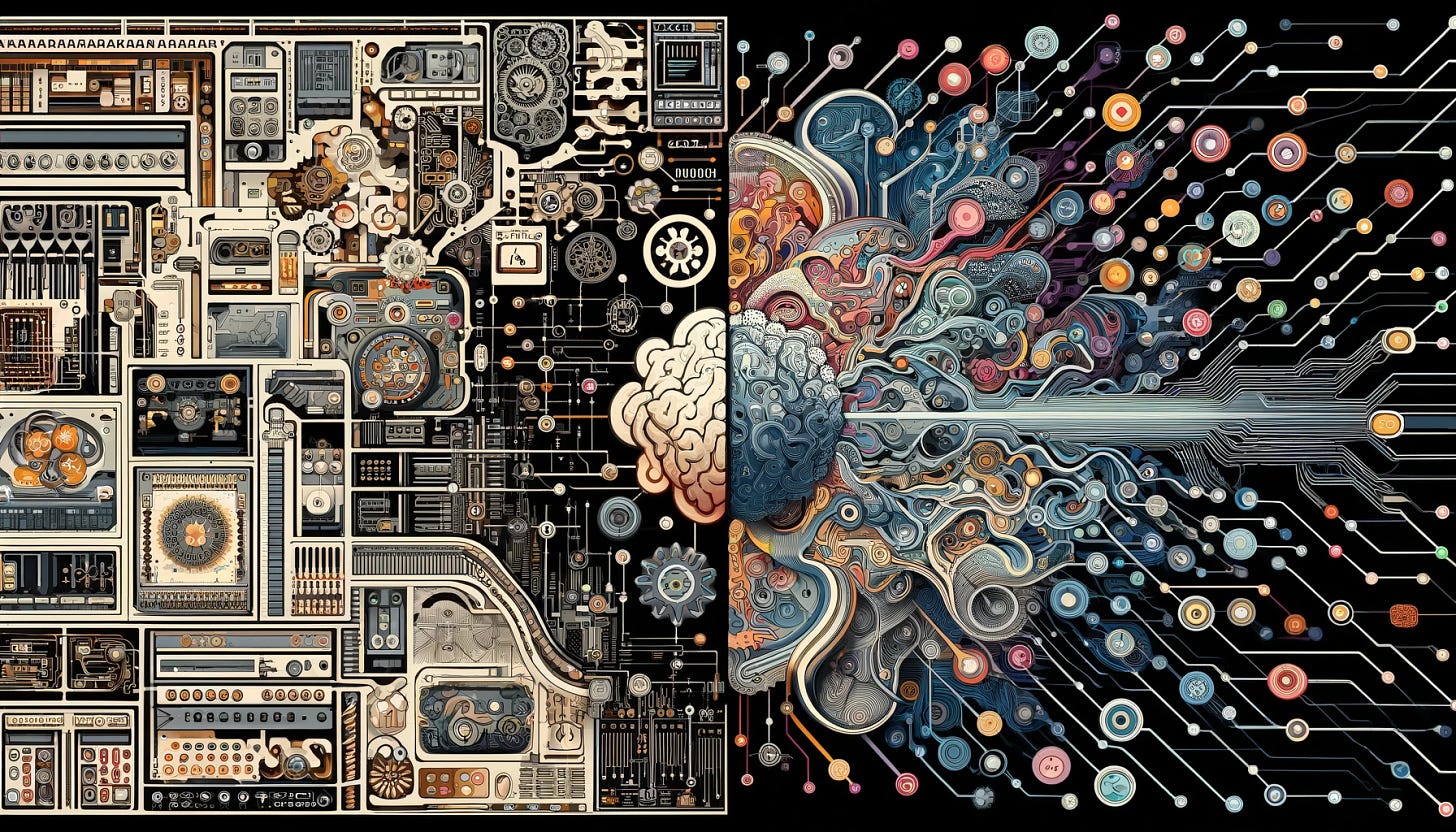

I’m an AI skeptic. I do not believe that artificial general intelligence (to be defined later) is possible. AI is broadly of two types: symbolic or neural. The usual typology is a confused muddle that mixes differences of application, of method, and of algorithmic complexity. There’s no need to make it that complicated. To keep it simple, I’ll use the two categories above.

History of AI

As usual in this newsletter, we begin with the basic history. If you want to enter a conversation, you have to listen first. My perennial advice when tackling a subject is to pretend you’re at a cocktail party filled with people you don’t know. You wouldn’t just start talking about what interests you. You’d listen first, and only after you understood the many conversations going on would you begin to contribute. You’ll make a fool of yourself if you just blurt out whatever comes to mind. Be polite and listen first. The history of a question is how you enter into the conversation.

One way to outline the development of AI practice is to look at the major accomplishments of Turing Award winners. The Turing Award, given by the Association for Computing Machinery (ACM), is computing’s highest honor, so its AI laureates are a reasonable guide to the ideas the field considers most important. The year of each award appears in parentheses.

Marvin Minsky (1969). As an undergraduate, Minsky, along with Dean Edmonds, designed and built the first neural network computer. They built on the earlier work of Warren McCulloch and Walter Pitts, who showed that networks of simple artificial neurons could compute any theoretically computable function. Minsky and Edmonds actually built such a network, out of simple computational units that emulate some characteristics of neurons. (The name perceptron, often applied loosely to such systems, comes from Frank Rosenblatt’s later work.) This was cutting-edge work. Later, when Minsky submitted his work on neural networks to his Princeton mathematics Ph.D. committee, the committee was troubled: should neural networks be considered mathematics? Famously, von Neumann told the committee, “If it isn’t now, it will be someday.” Minsky inaugurated the development of Probabilistic AI.
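To give a feel for what McCulloch and Pitts meant, here is a minimal sketch of a threshold neuron: it adds up weighted binary inputs and fires if the sum reaches a threshold. Such units can implement logical connectives, and connectives compose into larger computations. This uses the simplified, modern weighted-threshold form (the 1943 paper differs in details, such as how inhibition works), and the function names are hypothetical, chosen for illustration.

```python
"""A McCulloch-Pitts-style threshold unit, composed into simple logic gates.

Simplified modern form: weighted binary inputs, fire if the sum reaches a threshold.
"""


def threshold_unit(inputs: list[int], weights: list[float], threshold: float) -> int:
    """Fire (return 1) when the weighted sum of binary inputs reaches the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0


def and_gate(a: int, b: int) -> int:
    # Both inputs must be active to reach the threshold of 2.
    return threshold_unit([a, b], [1, 1], 2)


def or_gate(a: int, b: int) -> int:
    # Either input alone reaches the threshold of 1.
    return threshold_unit([a, b], [1, 1], 1)


def not_gate(a: int) -> int:
    # A negative (inhibitory) weight: the unit fires only when its input is off.
    return threshold_unit([a], [-1], 0)


def xor_gate(a: int, b: int) -> int:
    # No single unit computes XOR, but a small network of units does:
    # XOR(a, b) = (a OR b) AND NOT (a AND b).
    return and_gate(or_gate(a, b), not_gate(and_gate(a, b)))


if __name__ == "__main__":
    # Print the truth table for the network-built gates.
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "AND", and_gate(a, b), "OR", or_gate(a, b), "XOR", xor_gate(a, b))
```

The XOR case is the interesting one: it needs more than one unit, which is why the claim is about networks of neurons rather than single neurons.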

John McCarthy (1971). McCarthy is my hero. His famous 1958 MIT AI Memo No. 1 defined the high-level programming language Lisp (short for List Processing). Lisp became the dominant AI programming language for the next 40 years; arguably, it still is. In his famous paper “Programs with Common Sense,” McCarthy proposed a system beyond perceptrons, which he called the Advice Taker. The Advice Taker would represent knowledge symbolically, as a set of axioms, and draw inferences from those axioms. New axioms could be supplied during its normal operation, allowing the system to gain competence in new areas without being reprogrammed. McCarthy essentially invented the category I call Logic AI. Minsky briefly collaborated with McCarthy, but they parted ways when Minsky pursued a non-logic, perceptron-based program quite different from McCarthy’s symbolic approach.

In 1959, medical student Richard M. Friedberg introduced machine evolution, now called genetic programming. The idea was to try random solutions to a problem and mutate the more successful ones until one arrived at an answer that was “good enough.” The idea was right, but the mutation methods were inefficient and the computing hardware was slow, so few good results were obtained. Genetic algorithms are the area I work in today. Thanks, Mr. Friedberg.
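To make McCarthy’s Advice Taker idea a bit more concrete, here is a toy sketch of a symbolic knowledge base: facts and if-then axioms, inference by forward chaining, and new “advice” added while the system runs. McCarthy’s proposal used formal logic (predicate calculus); this sketch simplifies to propositional if-then rules. The class and method names (`KnowledgeBase`, `tell_fact`, `tell_rule`, `ask`) and the airport-flavored facts are hypothetical, made up for illustration.

```python
"""A toy knowledge base in the spirit of the Advice Taker (propositional simplification)."""

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Rule:
    premises: frozenset[str]  # all of these must already be known...
    conclusion: str           # ...for this fact to be inferred


@dataclass
class KnowledgeBase:
    facts: set[str] = field(default_factory=set)
    rules: list[Rule] = field(default_factory=list)

    def tell_fact(self, fact: str) -> None:
        """Add a ground fact (an axiom with no premises)."""
        self.facts.add(fact)

    def tell_rule(self, premises: list[str], conclusion: str) -> None:
        """Add an if-then axiom: if all premises hold, the conclusion holds."""
        self.rules.append(Rule(frozenset(premises), conclusion))

    def infer(self) -> set[str]:
        """Apply rules until no new facts appear (forward chaining to a fixed point)."""
        derived = set(self.facts)
        changed = True
        while changed:
            changed = False
            for rule in self.rules:
                if rule.premises <= derived and rule.conclusion not in derived:
                    derived.add(rule.conclusion)
                    changed = True
        return derived

    def ask(self, query: str) -> bool:
        """Is the query a known fact or derivable from the axioms?"""
        return query in self.infer()


if __name__ == "__main__":
    kb = KnowledgeBase()
    kb.tell_fact("at_home")
    kb.tell_fact("has_car")
    kb.tell_rule(["at_home", "has_car"], "can_drive_to_airport")
    print(kb.ask("can_drive_to_airport"))  # True
    print(kb.ask("can_fly"))               # False: nothing implies it yet
    # New "advice" arrives at run time; no reprogramming is needed.
    kb.tell_rule(["can_drive_to_airport", "has_ticket"], "can_fly")
    kb.tell_fact("has_ticket")
    print(kb.ask("can_fly"))               # True
```

The point of the design is that competence lives in the axioms, not the code: the inference loop never changes, and the system answers new questions because it was told new things.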
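The mutate-and-select idea behind Friedberg’s machine evolution can be sketched in a few lines. Friedberg’s experiments mutated machine-code programs; this sketch swaps in a simpler, now-standard setup: a population of random bit strings, keep the better half, refill with mutated copies, and stop once a candidate is “good enough.” The fitness function (matching a target bit pattern), the target itself, and every parameter value are hypothetical choices for illustration, and no crossover is used, matching the description of mutation alone.

```python
"""A minimal mutate-and-select loop (an illustrative evolutionary algorithm)."""

import random

Genome = list[int]


def fitness(genome: Genome, target: Genome) -> int:
    # Hypothetical toy objective: count positions that match a target bit pattern.
    return sum(g == t for g, t in zip(genome, target))


def mutate(genome: Genome, rate: float, rng: random.Random) -> Genome:
    # Flip each bit independently with probability `rate`.
    return [1 - g if rng.random() < rate else g for g in genome]


def evolve(target: Genome, good_enough: int, pop_size: int = 20,
           rate: float = 0.05, max_generations: int = 1000,
           seed: int = 0) -> tuple[Genome, int]:
    """Return the best genome found and the generation at which the loop stopped."""
    rng = random.Random(seed)
    n = len(target)
    # 1. Try random solutions.
    population = [[rng.randint(0, 1) for _ in range(n)] for _ in range(pop_size)]
    for generation in range(max_generations):
        population.sort(key=lambda g: fitness(g, target), reverse=True)
        best = population[0]
        # 3. Stop as soon as the best candidate is "good enough".
        if fitness(best, target) >= good_enough:
            return best, generation
        # 2. Keep the more successful half; refill with mutated copies of survivors.
        survivors = population[: pop_size // 2]
        children = [mutate(rng.choice(survivors), rate, rng)
                    for _ in range(pop_size - len(survivors))]
        population = survivors + children
    # Budget exhausted: return the best candidate seen so far.
    population.sort(key=lambda g: fitness(g, target), reverse=True)
    return population[0], max_generations


if __name__ == "__main__":
    target = [1, 0] * 16  # hypothetical 32-bit goal pattern
    best, generations = evolve(target, good_enough=28)
    print(f"stopped at generation {generations} with fitness {fitness(best, target)}/32")
```

Because survivors are carried over unchanged, the best fitness never goes down from one generation to the next; the mutation rate trades off exploration against wrecking good candidates, which is one place early systems struggled.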


Subscribe to Jeff Younger Show to keep reading this post and get 7 days of free access to the full post archives.